We measured every person at our company on AI. Here's what we learned.

We scored every employee across six dimensions of AI capability. The full framework, the surprising findings, and a self-assessment prompt you can run with your own team.

Driving AI usage is table stakes. The harder question is whether any of it is actually compounding beyond the individual. We interviewed every person at our company, scored everyone across six dimensions, and built individual growth profiles. The biggest findings: technical background barely predicted success, the traits that mattered were operational instinct and willingness to sit with discomfort, and sharing was the weakest dimension in the entire company. Below: the full framework, what we found, and a self-assessment prompt you can run with your own team.

Why we did this

Every company right now is trying to get their people to use AI - and they're pulling every lever to do it. Friendly (or not-so-friendly) competitions, leaderboards, demo days, show-and-tells. Some are even optimizing "tokenmaxxing." Driving AI usage is table stakes. You have to build the muscle. But once usage is climbing, how do you actually know if any of this is making the organization better?

There's no product on the market that answers this. AI vendors can tell you who's active, but they can't tell you whether that activity is compounding beyond the individual. They can't tell you whether the person building impressive automations has shared any of it with a single colleague. They can't tell you whether teams are building duplicate tools because nobody knows what already exists. They can't tell you whether the work that gets built ever reaches the people it was built for.

When we turned the lens inward at Tribe AI, we realized we had the same blind spot as everyone else: strong individual capability, no way to measure whether it was compounding beyond the person who built it. So we decided to measure it. The numbers were stark: 63% of people who built working AI tools had never deployed anything beyond their own laptop. 73% of tools built across the company were never used by anyone other than the person who built them.

The tools were there. The talent was there. The connective tissue wasn't. So our internal AI enablement dedicated team designed and ran the entire process to find out why.

What follows is what we built to measure organizational AI capability, what we learned, and what we're doing about it.

The tools were there. The talent was there. The connective tissue wasn't.

How we did it

The process had three steps.

First, everyone ran a self-assessment. We built a prompt that people dropped into Claude or ChatGPT - wherever they had the most conversation history. Some ran it in Claude Code or Codex as well, where they do most of their building. The prompt asked the AI to scan that person's chat history and assess them against six capabilities, using only concrete evidence from actual conversations. No vibes. No self-reported surveys. If the AI couldn't find evidence of a capability, it said so.

The prompt is included at the end of this post. You can run it with your own team.

Second, one-on-one interviews. The self-assessment gave us a starting point, but it only captured what showed up in chat history. The interviews went deeper: what have you built? What's sitting unfinished on your laptop? What have you shared with anyone else? What's blocking you? These weren't performance conversations. They were diagnostic - designed to understand how people actually work with AI, not to evaluate whether they're working hard enough.

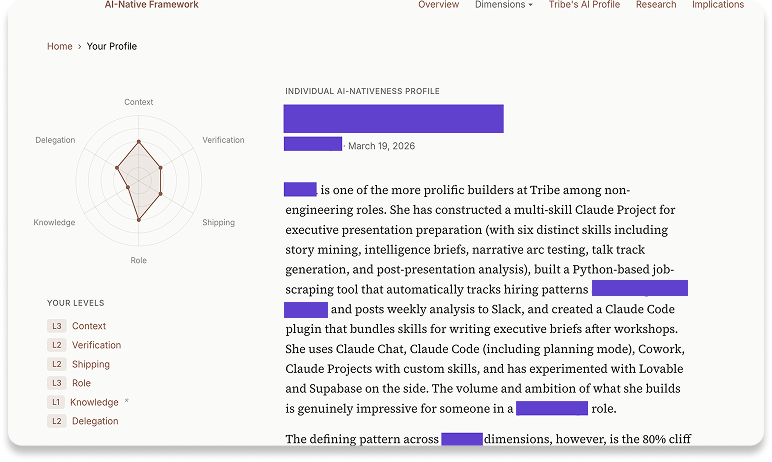

Third, we scored everyone across six dimensions of AI capability. The dimensions weren't designed top-down. We started with 764 distinct observations from the interviews, consolidated and clustered them through multiple rounds of analysis, and let the framework emerge from what people actually do, not from a hypothesis about what they should do. The process was deliberately structured to prevent a specific failure mode: AI-assisted analysis tends to smooth out the surprising, messy, contradictory stuff that makes qualitative data valuable. So reasoning was written before scores to prevent anchoring, and each transcript was analyzed independently before any cross-cutting patterns were drawn. The full methodology is published here separately for anyone who wants the details.

From there, we built individual growth profiles. Each person got a private page showing where they sit on each dimension, with specific recommendations for how to reach the next level. This is a company where everyone is expected to be growing their AI capability - the profiles were designed to make that growth visible and self-directed, not managed from the top. Leadership has visibility into where the organization's gaps cluster - you have to, in order to know where to invest - but individual profiles are private. Everyone chose what to focus on next. Nobody was publicly ranked against anyone else.

The six dimensions

We scored people across six dimensions. Together they answer a question that seat counts and usage dashboards can't: is someone's AI capability actually producing organizational value, or just individual output?

These six dimensions aren't a maturity model in the traditional sense. There's no expectation that everyone reaches the top level on every dimension. A CFO who operates at the highest level of delegation calibration but never builds a tool has made a legitimate choice. The framework is diagnostic, not prescriptive - it shows where people are and where the organization's gaps cluster. For the full five-level definitions and methodology, we've published the complete framework [Defining AI-Native Work at Tribe AI →].

What we found

The findings challenged assumptions we didn't realize we were holding.

Technical background barely mattered

Going in, the team expected engineers and technical PMs to be furthest ahead. They weren't - at least not uniformly. Some of the highest AI fluency in the company came from people with no engineering experience at all. One was a former personal trainer who'd never written code. He got obsessed. Two weeks after starting: 14 custom skills and 7 MCP integrations. Another, with no technical background, needed a dashboard and didn't want to wait for engineering to build it. She screenshotted a colleague's UI, pulled a product spec into Claude, layered in her own CSV data, and built a working version herself. She now runs SQL queries against 183,000+ records through a project she set up on her own.

These aren't anomalies. Across the full dataset, technical background was a weak predictor of who was thriving. What mattered more was whether someone could see their own work clearly enough to know what to hand off to AI — and that turned out to be an operational skill, not a technical one.

Technical background was a weak predictor of who was thriving. What mattered more was whether someone could see their own work clearly enough to know what to hand off to AI - and that turned out to be an operational skill, not a technical one.

Two traits predicted success better than speed, technical background, or enthusiasm.

Across every function and seniority level, the same two patterns kept surfacing.

- Operational instinct - the habit of noticing when a process has been repeated three times and asking whether it could be better. The people who thrived weren't the most technically gifted. They were the ones who could see their own work clearly enough to separate the judgment from the execution - and automate the execution while staying close to the judgment. One person mapped an entire workflow and turned it into four separate skills before touching anything. Another noticed she was copy-pasting the same context into every AI session and built a persistent environment that eliminated it entirely.

- Willingness to sit with discomfort - patience with the early friction of learning something new, long enough for it to click. The people who gave up after one frustrating session stayed at the starting line. The people who carved out time and sat with it for a week broke through. One person had never written a line of code. He watched a colleague build something, recognized what was possible, and committed. Two weeks later: 14 custom skills and 7 integrations. This isn't curiosity in the abstract. It's the specific tolerance for feeling incompetent at something you know will eventually pay off.

One practical finding reinforced both traits: voice turned out to be the largest non-technical unlock. Five minutes of talking produces roughly 10x more useful context than three typed sentences. The people who discovered this — dictating workflows, narrating their thinking, talking through problems out loud before typing anything — ramped faster and produced richer AI interactions. The value isn't the technology. It's the context density that speech naturally creates.

Five minutes of talking produces roughly 10x more useful context than three typed sentences. The people who discovered this - dictating workflows, narrating their thinking, talking through problems out loud before typing anything — ramped faster and produced richer AI interactions

Sharing was the weakest dimension in the company

This was the finding the team didn't see coming. Tribe's people are prolific builders. They're also, overwhelmingly, solo builders. The data was stark: 75% of people whose work was referenced by colleagues as helpful had their work in locations that couldn't be discovered through any directory or search. The most impressive work in the company was sitting on individual laptops, in personal Claude projects, in folders nobody else knew existed. At least two people had independently built the same tool without knowing about each other.

One person's work was cited as influential by nine different colleagues — yet none of that knowledge existed anywhere discoverable. It spread through hallway conversation and imitation, not through anything the next hire could find.The instinct was to call this a "reluctance to share" problem. It wasn't. When the team dug in, they found six distinct barriers — each requiring a different intervention:

- Perfectionism – "It's not ready yet." The most common barrier among the strongest builders. That threshold never arrives, so nothing ships.

- Imposter syndrome – "My work isn't as good as what others are building." Particularly common among people who underestimated how far ahead they actually were.

- Habit gap – People build, finish, move on. There's no step in the workflow that triggers sharing. They just forget.

- Structural barriers – There's nowhere to discover what exists. No directory, no registry, no way to find out someone solved the same problem last month.

- Broadcast skepticism – Some people don't believe a Slack post actually transfers knowledge. They're not entirely wrong - but sharing nothing is worse.

- Philosophical belief – "My workflow is too personal to be useful." This one requires counter-evidence from peers, not a pep talk.

Most companies try to solve knowledge sharing with a single intervention - a Slack channel, a demo day, a wiki. That addresses maybe one of the six.

The 80% cliff: why most AI tools never reach their intended audience

The team started calling this "the 80% cliff" because of how consistently it appeared. A striking number of people had working prototypes, functional tools, and personal automations that had never been deployed, delivered, or put in front of their intended audience. The abandonment point was remarkably consistent: right at the transition from "this works for me" to "this works for someone else."

The blockers were specific and distinguishable. Perfectionism was the most common. Missing deployment knowledge was the most tractable - a single pairing session closes it permanently. Time scarcity was the most cited but often the least honest — many people had time to start three new projects but not to finish one.

The relationship between the sharing problem and the shipping problem became one of the clearest findings in the research: they're the same problem. People who won't finish also won't share, for the same reasons. Perfectionism kills both.

The sharing problem and the shipping problem are the same problem. People who don't finish also won't share, for the same reasons. Perfectionism kills both.

What we're doing about it

Research that doesn't change behavior is just content. We think of Tribe as the lab for the transformation practices we bring to clients - so moving beyond the research into meaningful outcomes internally isn't optional.

The findings shaped one of the team's critical Q2 priorities: closing three specific gaps - what they're calling the three cliffs. Each one blocks the next: you can't share what you haven't deployed, and you can't deploy what you haven't built.

Beyond the cliffs, a few structural changes:

- The research was clear on one thing: presentations don't change behavior. Structured practice does. The team replaced recurring bi-weekly AI office hours with two formats. Pairing sessions - one-on-one, practitioner to practitioner - targeted at the three cliffs. And hands-on workshops covering GitHub basics, persistent context setup, verification design, and skills discovery. One hour each. Run by practitioners. You leave having built something, not having watched someone present about it.

- Every department is getting a prioritized AI initiatives list - built collaboratively, not top-down. The goal is to take work people are doing individually and make it shared and standardized across similar roles.

- The team is building knowledge infrastructure: a seeded and interactive internal knowledge base, a shared data layer so people stop configuring individual connectors, and skills sharing infrastructure so useful tools reach people passively rather than requiring them to go looking.

Our internal estimate, derived from anchor examples across the individual profiles: each level increase on a single dimension adds roughly 12% to a person's effective capacity. The dimensions compound. One level up across all six roughly doubles someone's output. The target for the quarter is closing the gaps that prevent that compounding - not by working more hours, but by making the work people are already doing extend beyond the individual who built it.

Each level increase on a single dimension adds roughly 12% to a person's effective capacity. The dimensions compound. One level up across all six roughly doubles someone's output.

How to run this yourself

If you want to run a version of this at your own company, here's where to start.

Start with the self-assessment. The prompt below is adapted from what we used internally. Have each person drop it into whatever AI tool they use most - Claude, ChatGPT, Claude Code, Codex - wherever they have the deepest conversation history.

Follow it with one-on-one conversations. The self-assessment captures what shows up in chat history. It misses everything else. A 30-45 minute conversation per person fills in what the prompt can't see. The questions that produced the most useful signal for us:What have you built that nobody else knows about? What's sitting unfinished? What would you build if you had protected time?

Score against the dimensions, but don't publicly rank.When people feel ranked, they optimize for the metric instead of actually growing. When they feel diagnosed, they engage. The distinction matters.

Design the interventions around what you find, not what you assumed.If people aren't sharing because of perfectionism, a Slack channel won't help. If they aren't building because they've never deployed, a training session on prompt engineering is solving the wrong problem. The diagnostic has to come before the prescription.

In closing

The question most companies are asking is "are people using AI?" That's the wrong question. Usage is a given at this point. The better question is: is the knowledge compounding?

Is what one person learns available to the next person who needs it? Is what gets built reaching the people it was built for? Is individual capability becoming organizational capability - or is it trapped in personal folders, on individual laptops, in projects nobody else can see?

When we ran this process internally, the honest answer to most of those questions was no. Not because people weren't capable. Because nothing in the organization was designed to make individual skill compound.

That's what this research was designed to surface. And once you can see where the gaps are, you can design specific interventions for each one - instead of running another all-hands training and hoping something sticks.

One thing we're upfront about: working at Tribe means being an AI expert. This research isn't optional enrichment. It's how we hold ourselves accountable to that standard - and how we make sure everyone has a real path to get there, regardless of where they start.

We've published the complete framework - including five-level definitions for each dimension and the full methodology - at Defining AI-Native Work at Tribe AI.

If any of this resonates with how your organization is navigating AI adoption, we're happy to share more about how we approached it.